A couple of weeks ago I wrote about 4shClaw, a personal multi-agent AI assistant running with Docker. It works. Agents spin up in containers, coordinate through a shared ledger, and build a game called GLORP autonomously. The architecture proved out the core ideas: container isolation, declarative capabilities, ephemeral agents, lead-agent orchestration.

But 4shClaw is a personal tool. Single user, SQLite, filesystem IPC, Node.js host. That’s fine for me. It’s not fine for a team of five engineers at a fintech company who want the same thing on their infrastructure, with audit trails their compliance team will accept.

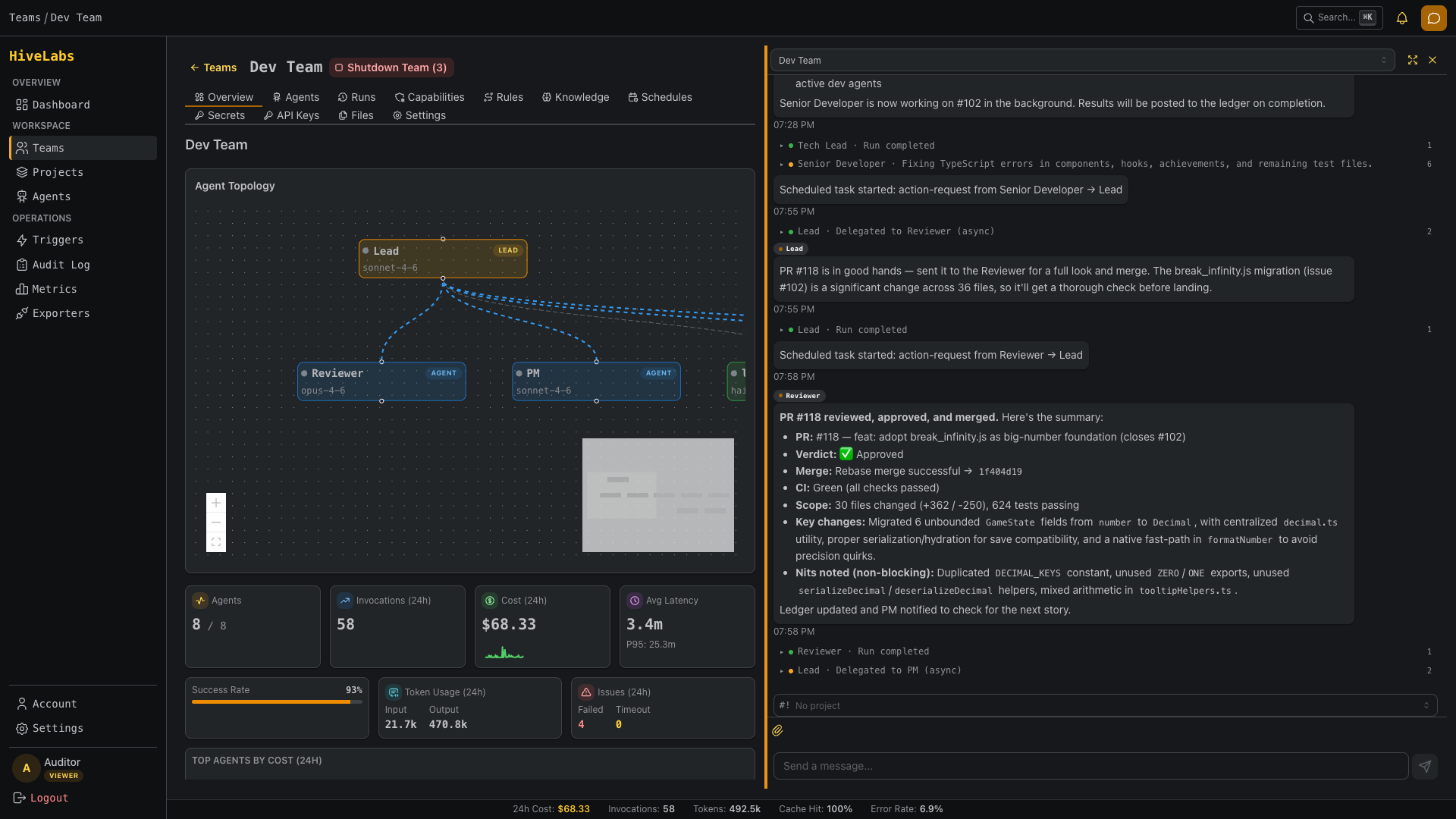

So I started building HiveLabs: the same core concepts, rebuilt from scratch for production use.

What Stays, What Changes

The mental model is identical. You have a team of agents. A lead orchestrates. Sub-agents have scoped capabilities and isolated execution environments. Memory is layered. Everything is logged.

The implementation is completely different.

| 4shClaw | HiveLabs | |

|---|---|---|

| Platform | Node.js host process | Single Rust binary (axum + tokio + sea-orm) |

| Database | SQLite | PostgreSQL 17 |

| IPC | Filesystem JSON polling | HTTP calls from container to platform API |

| Runtime | Docker only | Docker or Kubernetes |

| Agent definitions | YAML files on disk | Database rows, managed via API and web UI |

| Interface | Telegram | Web UI (React SPA) + REST API |

| Users | Single user, hardcoded | Multi-tenant with teams, RBAC, API keys |

| Observability | SQLite logs table | Structured event pipeline, OTel-compatible |

| Triggers | Cron schedules | Cron + webhooks + event-driven |

The divergence isn’t about scale for its own sake. Each change exists because 4shClaw hit a real limitation when I imagined anyone other than me using it.

Why Rust

4shClaw’s Node.js host works fine for a single user. But I kept running into situations where I wanted stronger guarantees: type safety across the entire API surface, predictable memory usage under load, compile-time enforcement of things like “every IPC call must be audited.”

Rust with axum gives me a single static binary that starts in milliseconds, uses a fraction of the memory, and catches entire categories of bugs at compile time. The type system is strict enough that if a handler compiles, the request/response shapes are correct. No runtime surprises.

The trade-off is development speed. Rust is slower to write than TypeScript. But for infrastructure code that needs to be correct and auditable, that trade-off is worth it. The agent runners inside containers are still TypeScript (the Claude Agent SDK is TypeScript), so it’s not like I’m fighting the ecosystem.

SeaORM handles database access. utoipa generates the OpenAPI spec from code annotations. The frontend types are auto-generated from that spec. One source of truth, compile-time checked, all the way from the database to the React component.

The IPC Problem

4shClaw’s filesystem-based IPC was elegant in its simplicity. Agent writes a JSON file, host polls, host writes a response file. Easy to debug, easy to audit, zero network surface.

It also doesn’t work across machines.

In Kubernetes, your agent pod might be on node A while the platform server runs on node B. There’s no shared filesystem. You could use a PersistentVolumeClaim, but now you’re polling a networked filesystem, which is slower and more fragile than just making an HTTP call.

HiveLabs uses HTTP for IPC. The agent container still has an MCP server providing the same tools: send_message, remember, recall, report_to_ledger, delegate_to_agent. But instead of writing JSON files, the MCP server makes authenticated HTTP calls to the platform’s internal API. The agent gets a one-time run token at spawn time, scoped to that specific invocation.

From Claude’s perspective inside the container, nothing changed. It calls the same MCP tools. The transport underneath is different, but the agent doesn’t know or care.

The audit story is actually better now. Every IPC call is an HTTP request that hits the platform’s middleware, which means structured logging, request tracing, and rate limiting come for free. With filesystem polling, I had to build all of that manually.

Runtime Backends

4shClaw uses Docker exclusively. docker run, docker exec, docker logs. Simple, direct, works on any machine with Docker installed.

HiveLabs abstracts this behind a RuntimeBackend trait with two implementations: DockerBackend (using bollard) for single-server deployments and local dev, and K8sBackend (using kube-rs) for production Kubernetes clusters. The platform picks one at startup based on configuration.

The Docker backend does what 4shClaw does: docker create, inject env vars, start, stream logs, clean up on exit. The K8s backend creates pods with proper resource limits, network policies, service accounts, and ephemeral volumes. Same agent, same runner image, different orchestration.

This isn’t theoretical future work. The Docker backend is fully functional. The K8s backend is planned for Phase 7. But the trait boundary means adding it requires zero changes to the orchestrator, the API, or the agent runners.

Multi-Tenancy

4shClaw has one user. My Telegram ID is basically hardcoded. Memories are tagged with a user scope, but there’s no real auth system.

HiveLabs has users, teams, API keys, and role-based access control from day one. Not because I need it today, but because retrofitting multi-tenancy is one of those architectural decisions that’s ten times harder to add later than to build in from the start.

Teams are the organizational boundary. Each team has:

- A lead agent (auto-created, one per team, cannot be deleted)

- Agents with different capabilities and prompts

- An optional lorekeeper for memory management

- A shared ledger

- Isolated memory namespaces

Users authenticate via JWT or API keys. API keys are scoped to teams. Every request is authorized against the team boundary. An agent in Team A cannot access Team B’s memories, ledger, or agents.

PostgreSQL’s row-level security handles the data isolation. The application layer enforces it too, but having it at the database level means a bug in the API code can’t accidentally leak data across teams.

Agent Roles

4shClaw had a loose role system: “lead”, “sub-agent”, and the lorekeeper was just a sub-agent with special capabilities. HiveLabs formalizes this into exactly three roles:

Lead is the entrypoint. One per team, auto-created. All user requests that aren’t addressed to a specific agent go through the lead. It orchestrates, delegates, and maintains awareness through the shared ledger. Same concept as 4shClaw, just enforced at the database level with a constraint.

Agent is the working force. Different agents have different prompts and capabilities. Some may have the delegate capability, making them sub-orchestrators for specific workflows. The PM, developer, and reviewer from 4shClaw would all be “agent” role in HiveLabs.

Lorekeeper handles memory consolidation. Independent from the team hierarchy, max one per team. Runs on a schedule, deduplicates memories, distills knowledge. Same job as in 4shClaw, just with a proper role definition and database constraints.

A dev team in HiveLabs: Lead, Developer, PM, Reviewer, Senior Developer, Tech Lead, Designer, and Lorekeeper. Each with its own model, runner, and capabilities.

No “reviewer” role, no “PM” role, no “scout” role. Those are behavioral differences defined by the agent’s prompt and capabilities, not structural roles. The platform doesn’t need to know that an agent is a PM. It needs to know whether it’s a lead, a worker, or the memory manager.

The Web UI

4shClaw uses Telegram as its interface. That works surprisingly well for a personal assistant: quick messages, push notifications, conversation history. But it’s terrible for anything visual. Viewing agent logs, managing configurations, browsing audit trails, none of that fits in a chat interface.

HiveLabs ships a web UI as a React SPA embedded directly in the Rust binary via rust-embed. One binary, one port, serves both the API and the frontend. No separate frontend deployment, no CORS configuration, no nginx reverse proxy.

The UI covers what Telegram can’t: agent management (create, edit, configure capabilities), team dashboards, run history with full logs, audit trail search, and a chat interface for talking to your team’s lead agent.

The frontend types are auto-generated from the OpenAPI spec that utoipa produces from the Rust handler annotations. Change a DTO in Rust, run make openapi-all, and the TypeScript types update automatically. No manual type synchronization, no drift.

Observability

4shClaw logs everything to a SQLite table. That’s fine for debugging. It’s not fine for a production system where you need alerting, dashboards, and integration with existing monitoring infrastructure.

HiveLabs has a structured event pipeline. Every agent action (spawn, IPC call, tool use, completion) emits a typed event. These events flow through an internal bus to multiple consumers: the audit log (PostgreSQL, always on, non-negotiable), an OpenTelemetry exporter for traces and metrics, and pluggable backends for LangFuse or LangSmith if you want LLM-specific observability.

The audit log: every IPC call, agent spawn, and tool invocation with timestamps, event types, and full context.

The audit log is the one thing I’m most opinionated about. If you’re running AI agents on regulated infrastructure, you need to answer “what did agent X do at time Y and why?” That’s not optional. Every IPC call, every container spawn, every tool invocation is logged with the full context: who triggered it, what the input was, what the output was, how long it took.

4shClaw proved the concepts work; HiveLabs is making them work for everyone else.